Meta has released Muse Spark, a compact world model designed to help robots understand and predict physical interactions in their environment. The model, announced alongside a broader initiative called Meta Spatial Learning, represents the company's latest push into embodied AI and continues Mark Zuckerberg's strategy of open-sourcing foundational AI tools.

What Muse Spark Actually Does

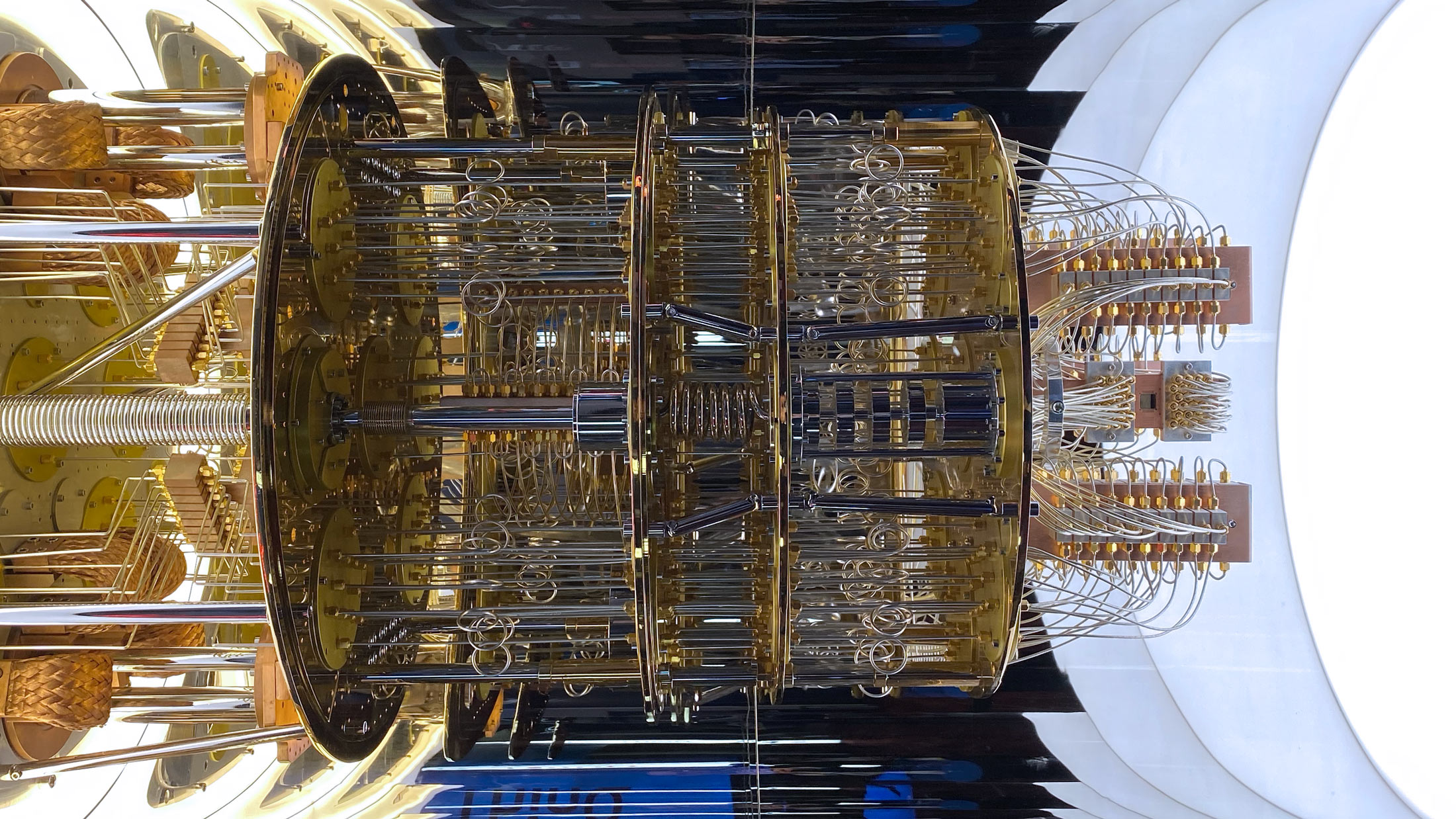

World models are AI systems that build internal representations of how the physical world works. Rather than reacting to stimuli frame by frame, a robot equipped with a world model can anticipate what will happen next. If a cup is pushed toward the edge of a table, the model predicts the fall before it happens.

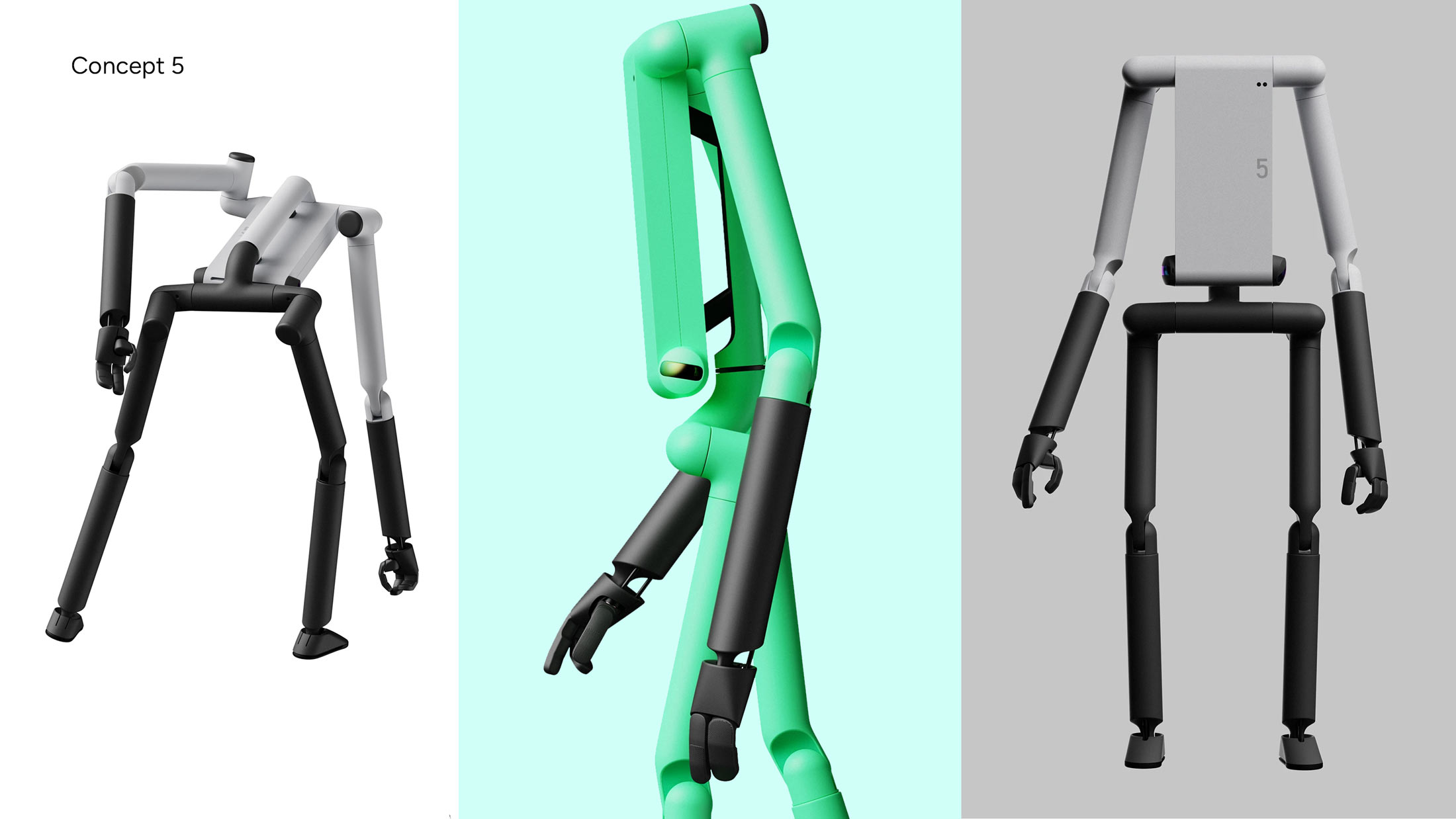

Muse Spark is trained to generate plausible future video frames based on current visual input and proposed actions. This gives robots a form of imagination, the ability to simulate outcomes before committing to a movement. The approach differs from traditional reinforcement learning, which typically requires millions of trial-and-error interactions to learn basic tasks.
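To make the idea of "imagining before acting" concrete, here is a minimal sketch of planning with a predictive model. It is not Muse Spark's API or Meta's method: `toy_world_model` and `plan` are hypothetical names, the state is a single number rather than a video frame, and the planner is plain random shooting, chosen only because it is the simplest way to show simulated rollouts being scored before any action is taken.

```python
import random


def toy_world_model(state, action):
    """Hypothetical stand-in for a learned predictor.

    A real world model would map (current frames, proposed action) to
    predicted future frames. Here the state is a 1-D position and the
    action is a displacement, so the "prediction" is just addition.
    """
    return state + action


def plan(state, goal, horizon=5, candidates=200, seed=0):
    """Random-shooting planner: imagine many action sequences, keep the best."""
    rng = random.Random(seed)
    best_seq, best_cost = None, float("inf")
    for _ in range(candidates):
        # Propose a random sequence of actions.
        seq = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        # Roll the sequence forward inside the model, not in the real world.
        imagined = state
        for action in seq:
            imagined = toy_world_model(imagined, action)
        # Score the imagined outcome by its distance from the goal.
        cost = abs(goal - imagined)
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq, best_cost


best_seq, best_cost = plan(state=0.0, goal=2.0)
print(f"first action to execute: {best_seq[0]:.3f}, imagined miss: {best_cost:.3f}")
```

In practice a robot would execute only the first action of the best sequence, observe the result, and replan. All of the trial and error happens inside the model, which is the contrast with reinforcement learning drawn above.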

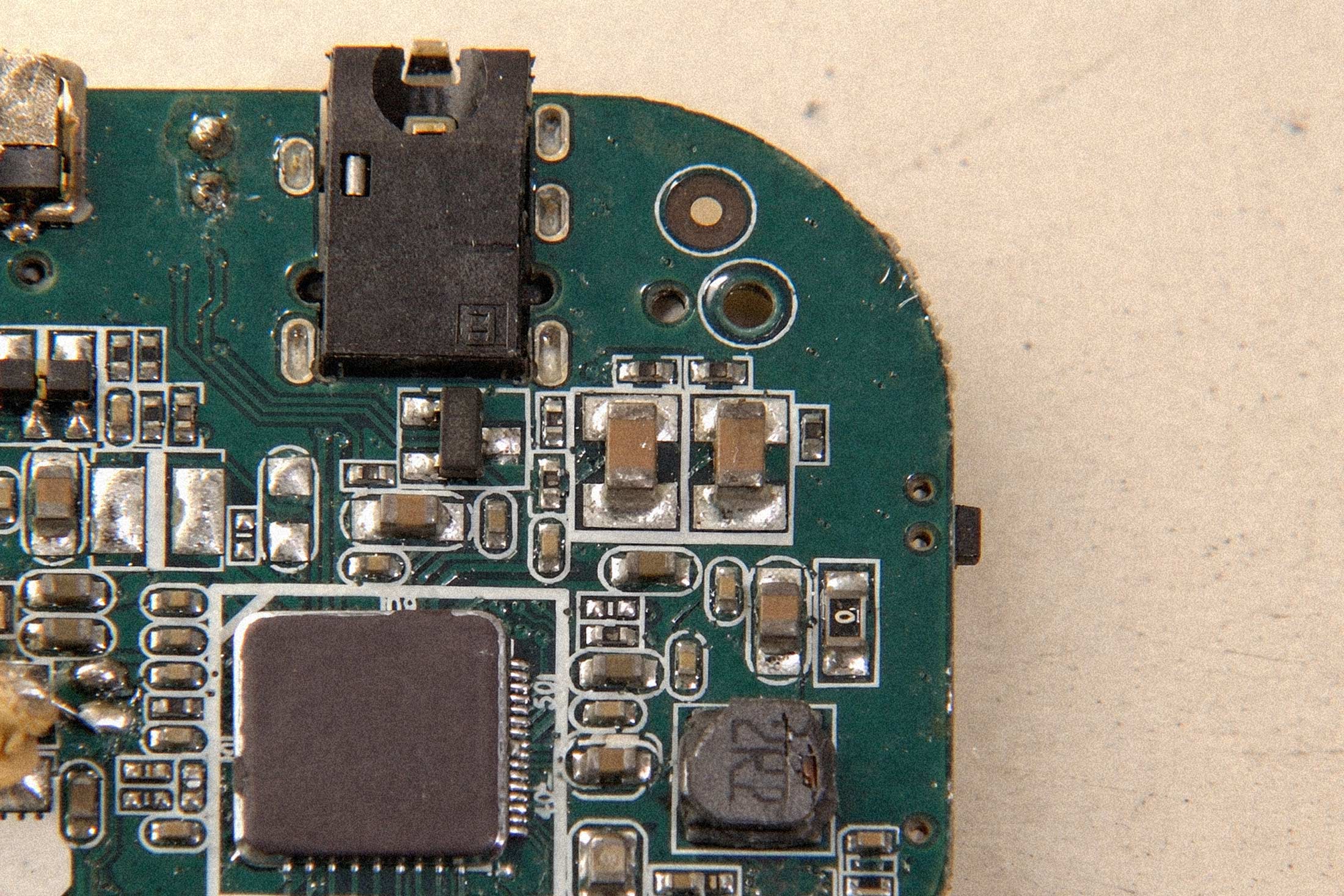

The model comes in a distilled form designed to run efficiently on edge devices. Meta claims it can operate in real time on hardware comparable to what you'd find in consumer robotics platforms. That's a meaningful constraint to design for. Most capable AI models require cloud inference, which introduces latency and a dependency on connectivity. A world model that runs locally could let robots operate in environments where network access is unreliable or nonexistent.

The Meta Spatial Learning Framework

Muse Spark is part of a larger initiative Meta is calling Meta Spatial Learning, or MSL. The framework includes datasets, benchmarks, and training protocols aimed at accelerating research in embodied AI. Meta has released the model weights and code under a permissive license, following the pattern established with Llama.

The dataset used to train Muse Spark draws from video of humans and robots manipulating objects, combined with synthetic environments rendered in simulation. This hybrid approach addresses one of the persistent challenges in robotics research: collecting enough high-quality real-world data is expensive and slow, but purely synthetic training often fails to generalize to actual physical environments.

Meta's researchers claim the model shows strong transfer learning capabilities, performing reasonably well on manipulation tasks it wasn't explicitly trained for. If those claims hold up under independent testing, the model would represent a genuine step forward. The robotics industry has long struggled with systems that work well in controlled demos but fail in novel conditions.

Why Zuckerberg Keeps Giving Things Away

The strategic logic behind Meta's open-source approach has become clearer over the past two years. By releasing powerful AI tools freely, Meta cultivates an ecosystem of developers and researchers who build on its stack. This creates switching costs, attracts talent, and generates feedback that improves Meta's own products. The company doesn't need to sell AI directly if AI adoption drives engagement with its core platforms.

For robotics specifically, Meta has no hardware business to protect. Unlike companies building physical robots, Meta can afford to commoditize the software layer. If Muse Spark becomes widely adopted, it strengthens Meta's position as an infrastructure provider without requiring the company to manufacture anything.

The timing also matters. Meta has been aggressively expanding its AI research footprint, and robotics is one of the few domains where the foundation-model race hasn't yet been decisively won. Google, OpenAI, and various well-funded startups are all pursuing similar world model approaches. By releasing early and openly, Meta positions itself as a default starting point for researchers who might otherwise build on proprietary alternatives.

Practical Limitations

World models remain an immature technology. Predicting physical dynamics from video is computationally intensive and error-prone. Small prediction mistakes compound rapidly, making long-horizon planning unreliable. Muse Spark addresses some efficiency concerns, but the fundamental accuracy challenges persist.
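The compounding effect can be illustrated with a toy calculation. The sketch below is an assumption-laden example, not a measurement of Muse Spark: it uses a one-dimensional system whose true per-step gain is 1.10 and a hypothetical model that has learned a slightly wrong gain of 1.12, then compares the two rollouts over 30 steps.

```python
def rollout(x0, gain, steps):
    """Repeatedly apply x <- gain * x and return the full trajectory."""
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] * gain)
    return xs


# Illustrative numbers only: the "true" dynamics and a slightly wrong model.
true_traj = rollout(1.0, gain=1.10, steps=30)
pred_traj = rollout(1.0, gain=1.12, steps=30)

# Absolute gap between predicted and true state at each step.
errors = [abs(p - t) for p, t in zip(pred_traj, true_traj)]

for step in (1, 5, 10, 20, 30):
    relative = errors[step] / true_traj[step]
    print(f"step {step:2d}: abs error {errors[step]:8.3f}, relative {relative:6.1%}")
```

Each step feeds the previous prediction back in as input, so the per-step error multiplies rather than adds. How quickly real video-prediction errors grow depends on the model and the scene, but the feedback loop is the same, which is why long-horizon plans are the first to become unreliable.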

There's also the question of safety. A robot that can imagine consequences is useful. A robot that imagines incorrectly and acts on those predictions could cause real harm. The gap between research capability and deployment-ready systems remains substantial in robotics, and open-sourcing powerful models doesn't automatically solve the integration challenges that make real-world deployment difficult.