Quantum computing has a ceiling, and almost all of it is made of noise.

Every roadmap from every major player eventually runs into the same wall. You can fabricate more qubits. You can improve gate fidelities by fractions of a percent per year. You can stack error correction on top of error correction. But the reason a machine with a thousand physical qubits struggles to reliably run a circuit that a classical laptop handles in milliseconds is that quantum information is fragile in a way classical bits simply are not, and the fragility has a name: decoherence. Stray electromagnetic fields, thermal fluctuations in the substrate, crosstalk from neighboring qubits, two-level-system defects in the oxide layers of the chip itself. All of it adds up, and all of it degrades the delicate phase relationships that make a quantum computer quantum in the first place.

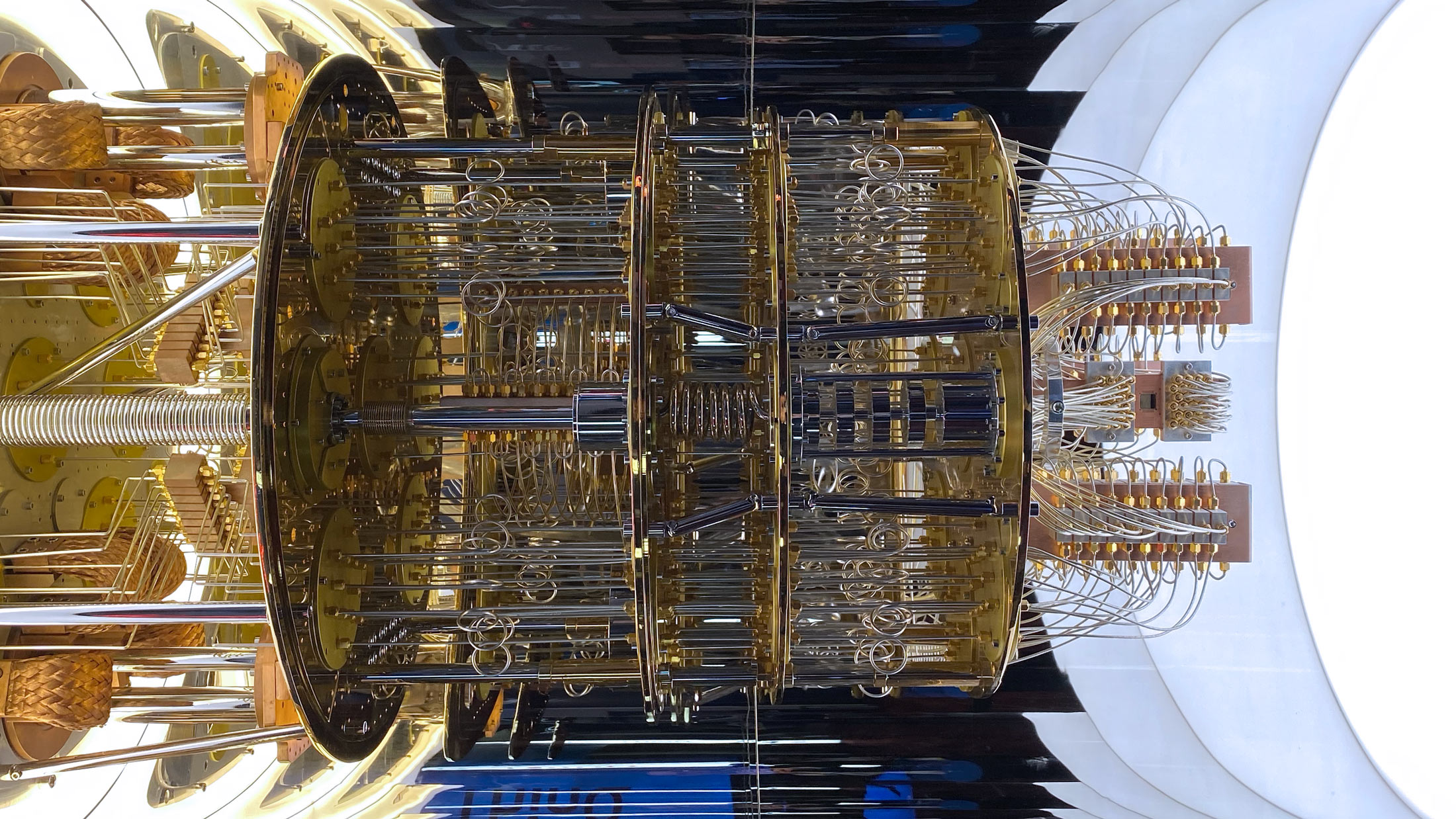

The standard response to this problem has been to treat noise the way a recording engineer treats hiss. You shield against it. You cool the system to within a fraction of a degree of absolute zero. You isolate qubits from each other during idle periods. You apply error correction codes that use many physical qubits to encode one logical qubit, buying reliability through sheer redundancy. The assumption underneath all of this work is that noise is a scalar quantity, something you have more or less of, and that every increment of environmental activity is another increment of damage. Quieter is better. Colder is better. Less interaction is better. Full stop.

That assumption is starting to look incomplete.

Where the picture breaks

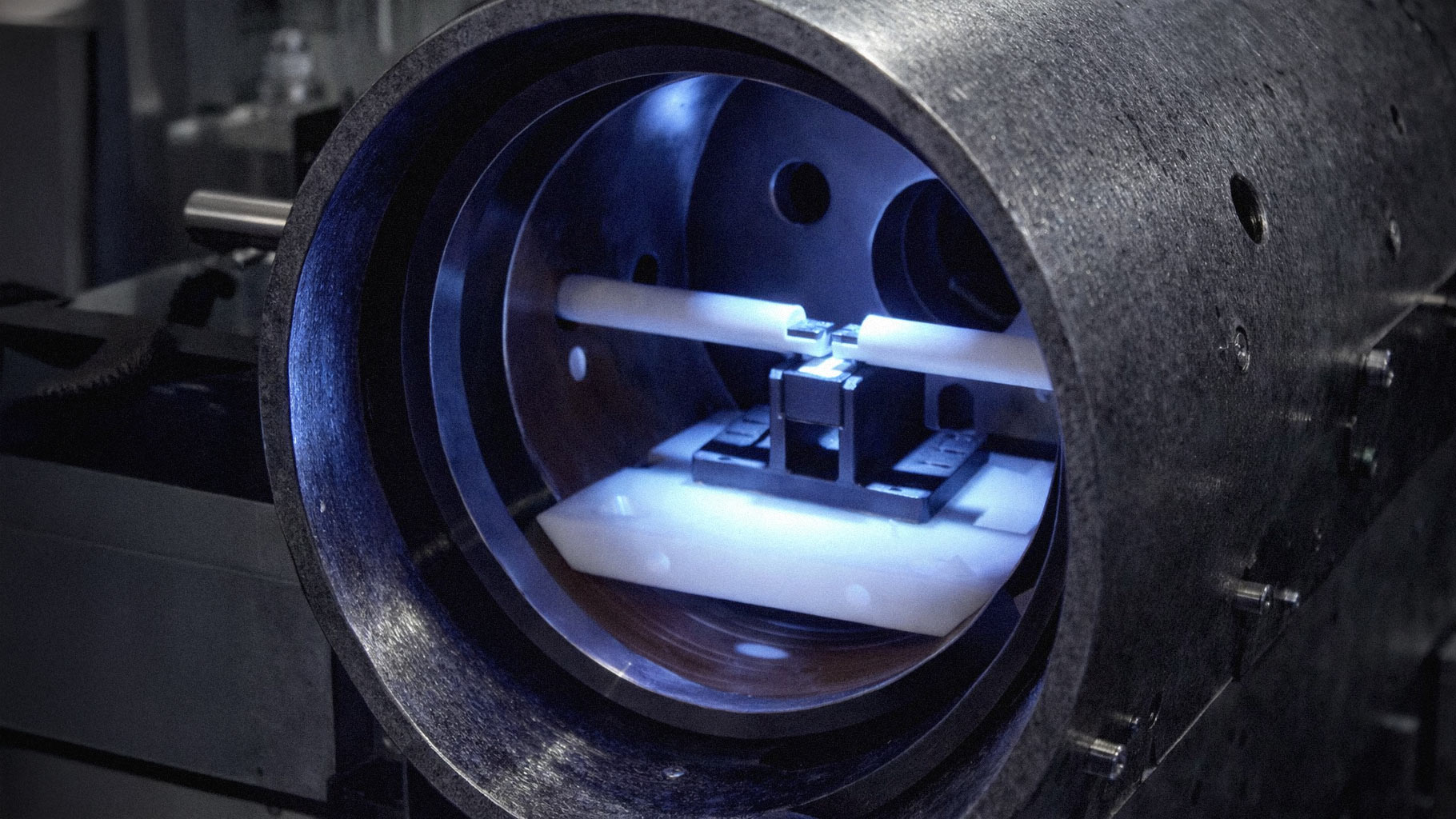

Over the past couple of years, a scattering of experimental probes on superconducting hardware has produced results that the standard noise model has a hard time explaining. The setups are not exotic. They use commercially available processors, ordinary control pulses, and the kind of protocols any university lab can run. What makes them interesting is what happens when the experimenters stop trying to minimize activity around a target qubit and start deliberately cranking it up.

In one class of test, a single qubit is prepared in an excited state and then surrounded by a buffer of neighboring qubits that get driven with rapid bit-flip operations. Textbook physics predicts a simple outcome. As the drive rate climbs, crosstalk and local heating should bleed energy into the target, and its lifetime should shorten. For low drive rates, that is roughly what happens. Then the curve does something it is not supposed to do. Past a threshold somewhere in the tens of megahertz, the target qubit's lifetime stops getting worse and starts getting better. At the highest drive rates, it outlives the baseline case where the neighbors were left alone.
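"Lifetime" in a test like this is the energy-relaxation time, T1: prepare the excited state, wait a variable delay, measure how often the qubit is still excited, and fit an exponential decay. The sketch below shows that fit under a simplifying assumption, a pure exponential P(t) = exp(-t / T1) with no amplitude or readout-offset terms (practical fits usually add both and use nonlinear least squares). The function name `fit_t1` and the data in the usage block are hypothetical and synthetic, not taken from the experiments described.

```python
import math


def fit_t1(delays_us, excited_populations):
    """Estimate the relaxation time T1 from an excited-state decay curve.

    Assumes P(t) = exp(-t / T1) with no amplitude or offset terms, so that
    ln P(t) = -t / T1 is a straight line through the origin. The least-squares
    slope of such a line is sum(t * ln P) / sum(t**2), and T1 = -1 / slope.
    """
    if not delays_us or len(delays_us) != len(excited_populations):
        raise ValueError("need matching, non-empty delay and population lists")
    if any(p <= 0 for p in excited_populations):
        raise ValueError("populations must be positive to take a logarithm")
    den = sum(t * t for t in delays_us)
    if den == 0:
        raise ValueError("at least one delay must be non-zero")
    num = sum(t * math.log(p) for t, p in zip(delays_us, excited_populations))
    slope = num / den
    if slope >= 0:
        raise ValueError("populations do not decay, so T1 is undefined")
    return -1.0 / slope


if __name__ == "__main__":
    # Synthetic, noise-free data generated from an assumed T1 of 50 microseconds;
    # these are illustrative numbers, not measurements from any experiment.
    assumed_t1_us = 50.0
    delays = [10.0 * i for i in range(11)]
    populations = [math.exp(-t / assumed_t1_us) for t in delays]
    print(f"fitted T1 = {fit_t1(delays, populations):.1f} us")
```

In a drive-rate sweep like the one described, this fit would be repeated at each neighbor drive rate and the fitted T1 compared against the undriven baseline. The anomaly would appear as fitted T1 first falling and then rising as the drive rate climbs.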

A related probe looks at what happens to entangled pairs under the same kind of treatment. Two qubits are prepared in a Bell state, a buffer region between them is driven hard, and then the experimenters measure both the local coherence of each qubit and the non-local correlations that make the pair entangled in the first place. If noise were the undifferentiated fluid the standard model treats it as, both quantities should decay together. They do not. The local states hold up, sometimes better than baseline. The non-local phase correlations collapse almost immediately. Same drive, same geometry, two completely different fates for two different kinds of quantum information.

Neither result fits comfortably inside the assumption that noise is a uniform thing that gets monotonically worse with activity. Both suggest that the environment around a qubit has structure the field has been ignoring, and that the structure can be driven, saturated, or steered in ways that depend on the details of how you prod it.

What this would mean if it holds up

The right caveat belongs up front. These results are early. Sample sizes are small. The most dramatic interpretations, including speculation about finite information bandwidths in the vacuum itself, are nowhere near accepted physics and may never be. Replication on different hardware platforms (trapped ions, neutral atoms, other superconducting architectures) is the obvious next step and has not yet happened at scale. It is entirely possible that some or all of the observed anomalies will turn out to be artifacts of specific pulse calibrations or device quirks.

With that caveat loud and clear, the engineering implications of even a weak version of these findings are significant enough to warrant attention now.

Current quantum chip design treats idle qubits as liabilities. They leak, they couple to their neighbors in uncontrolled ways, they introduce crosstalk, and a great deal of design effort goes into parking them in configurations that minimize their influence on whatever computation is happening. If it turns out that driven neighbors can actively shield a target qubit under the right conditions, that entire posture inverts. The unused qubits on a chip become a resource. A layer of them surrounding a computational region could function as a programmable buffer, something closer to an active noise-cancellation system than a passive hazard to be managed.

Current error correction schemes implicitly assume that local coherence and entanglement degrade together, and that protecting one protects the other. If there are operating regimes where the two can be decoupled, where a chip can be tuned so that local states are preserved while certain kinds of non-local correlations are preferentially destroyed, or vice versa, then some correction strategies are spending their redundancy budget in the wrong places. Knowing that the two failure modes can be separated would let designers build codes that target whichever mode is actually dominant in a given regime, instead of treating the whole noise environment as a single undifferentiated threat.
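What it means for redundancy to be aimed at one failure mode can be made concrete with the textbook bit-flip repetition code. This is an analogy with a different pair of failure modes (bit flips versus phase flips, not local versus non-local errors), and it is a toy sketch, not a model of the experiments above. It assumes a plain d-qubit repetition code, independent errors on every physical qubit, and perfect majority-vote decoding; the function names are hypothetical. Under those assumptions, adding qubits suppresses logical bit flips but makes logical phase flips more likely, because any odd number of phase errors flips the encoded phase and the code's parity checks cannot see it.

```python
from math import comb


def logical_bit_flip_rate(p: float, d: int) -> float:
    """Chance that majority voting over d qubits decodes the wrong bit value.

    Toy model: each physical qubit suffers an X (bit-flip) error independently
    with probability p, and decoding fails when more than half of them flip.
    """
    _check(p, d)
    majority = d // 2 + 1  # fewest flips that outvote the unflipped qubits
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(majority, d + 1))


def logical_phase_flip_rate(q: float, d: int) -> float:
    """Chance that independent Z (phase-flip) errors flip the encoded phase.

    In the bit-flip repetition code an odd number of Z errors acts as a logical Z
    that the parity checks cannot detect, so the failure probability is the
    probability of an odd number of flips: (1 - (1 - 2q)**d) / 2.
    """
    _check(q, d)
    return (1 - (1 - 2 * q) ** d) / 2


def _check(prob: float, d: int) -> None:
    """Reject code sizes and probabilities the toy model does not cover."""
    if d < 1 or d % 2 == 0:
        raise ValueError("d must be a positive odd integer")
    if not 0.0 <= prob <= 1.0:
        raise ValueError("error probability must lie in [0, 1]")


if __name__ == "__main__":
    # Same 1% physical error rate for both error types, growing code size.
    for d in (1, 3, 5, 7):
        print(
            f"d={d}: bit-flip {logical_bit_flip_rate(0.01, d):.2e}, "
            f"phase-flip {logical_phase_flip_rate(0.01, d):.2e}"
        )
```

Real quantum codes such as the surface code protect against both error types at once, and codes tailored to biased noise shift their redundancy toward whichever error dominates. The toy shows why knowing which failure mode dominates changes where redundancy should go.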

Current fabrication priorities put enormous weight on reducing coupling between qubits, because coupling is the channel through which crosstalk propagates. If coupling can also be used to propagate protection, the optimization target shifts. You no longer want the smallest possible coupling. You want the coupling that puts you on the right part of a non-monotonic curve, and that curve has to be mapped before you can design for it.

None of these changes are small. They touch layout, control electronics, pulse scheduling, calibration procedures, and the assumptions baked into the compilers that turn high-level quantum circuits into hardware instructions. A field that has spent twenty years optimizing for a passive, additive noise model would have to rebuild a fair amount of its tooling around a model in which the environment talks back.

Why it matters now

Quantum computing is at an awkward point in its development. The hardware has gotten good enough to run genuinely interesting experiments, but not good enough to deliver the commercial applications that have been promised for a decade. The bottleneck is almost entirely about error rates, and error rates are almost entirely about noise. Every proposed path to useful fault-tolerant machines depends on driving physical error rates down far enough that error correction can take over and do the rest. The field has been grinding out those improvements incrementally, and the grind is slow because the underlying model treats the environment as something to be fought rather than used.

If the probes described above hold up, even partially, they suggest the grind has been missing a whole axis of improvement. The fix would not look like a better shielding material or a colder fridge. It would look like a reframing of what the environment around a qubit actually is and what it can be made to do. That kind of reframing does not come along often in hardware engineering, and it is worth paying close attention to when it does, even at the stage where the evidence is preliminary and the interpretations are contested.

The experiments need to be replicated. The mechanisms need to be nailed down. The theorists need to catch up with whatever the hardware is actually showing. But the possibility that the field has been wrong about something foundational, and that correcting the mistake could loosen the constraint that has been holding quantum computing back from its promises, deserves more attention than it is currently getting.