There's a persistent misconception about quantum computing that goes something like this: once you have a working quantum computer, you can scale it by connecting more of them together. The logic seems reasonable. It's how we built the internet, how data centers work, how supercomputers operate. But quantum systems don't follow these rules. The physics that makes them powerful also makes them extraordinarily difficult to scale.

When Google, IBM, or any other company announces a new qubit count, the number itself tells you almost nothing about capability. A 1,000-qubit machine isn't necessarily more useful than a 100-qubit machine. It might actually be worse. The reason comes down to a property that has no real analog in classical computing: coherence.

The Noise Floor Problem

Qubits exist in superposition states that encode information through quantum mechanical properties. These states are fragile. Environmental interference, thermal fluctuations, electromagnetic noise, even cosmic rays can cause qubits to lose their quantum properties in a process called decoherence. Once a qubit decoheres, its computational value collapses.

The challenge is that adding more qubits doesn't just add more computational power. It adds more sources of noise, more interactions that can go wrong, more opportunities for errors to cascade through the system. The scaling problem isn't about size. It's fundamentally about maintaining coherence across an increasingly complex system.

This is why you can't simply network quantum computers together like classical machines. Classical bits are robust. You can copy them, transmit them, store them indefinitely. An arbitrary unknown quantum state cannot be copied, a consequence of the no-cloning theorem. It cannot be measured without disturbing the superposition. Transmitting it requires exotic techniques like quantum teleportation, which itself requires pre-shared entanglement and a classical communication channel.
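To make the copying restriction concrete, here is a minimal sketch in plain Python. It assumes a two-qubit state written as a list of four amplitudes and uses a CNOT gate as a hypothetical "copier". The function names are illustrative, not from any library. It illustrates one failed copier, not a proof of the theorem.

```python
import math

def tensor(a, b):
    """Tensor product of two single-qubit states (lists of 2 amplitudes)."""
    return [x * y for x in a for y in b]

def cnot(state):
    """Apply CNOT (control = first qubit) to amplitudes ordered |00>,|01>,|10>,|11>."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]  # swaps the |10> and |11> amplitudes

def attempt_clone(psi):
    """Try to 'copy' psi onto a blank |0> qubit using CNOT."""
    blank = [1.0, 0.0]
    return cnot(tensor(psi, blank))

def is_cloned(psi, tol=1e-9):
    """True if the CNOT output equals two independent copies of psi."""
    wanted = tensor(psi, psi)
    got = attempt_clone(psi)
    return all(abs(w - g) < tol for w, g in zip(wanted, got))

if __name__ == "__main__":
    zero = [1.0, 0.0]
    one = [0.0, 1.0]
    plus = [1 / math.sqrt(2), 1 / math.sqrt(2)]  # equal superposition
    print("clone |0>:", is_cloned(zero))
    print("clone |1>:", is_cloned(one))
    print("clone |+>:", is_cloned(plus))
```

The basis states copy correctly, but the superposition comes out as an entangled pair rather than two independent copies. This is the behavior the no-cloning theorem guarantees for any linear "copier", not just this one.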

What Functional Scaling Actually Requires

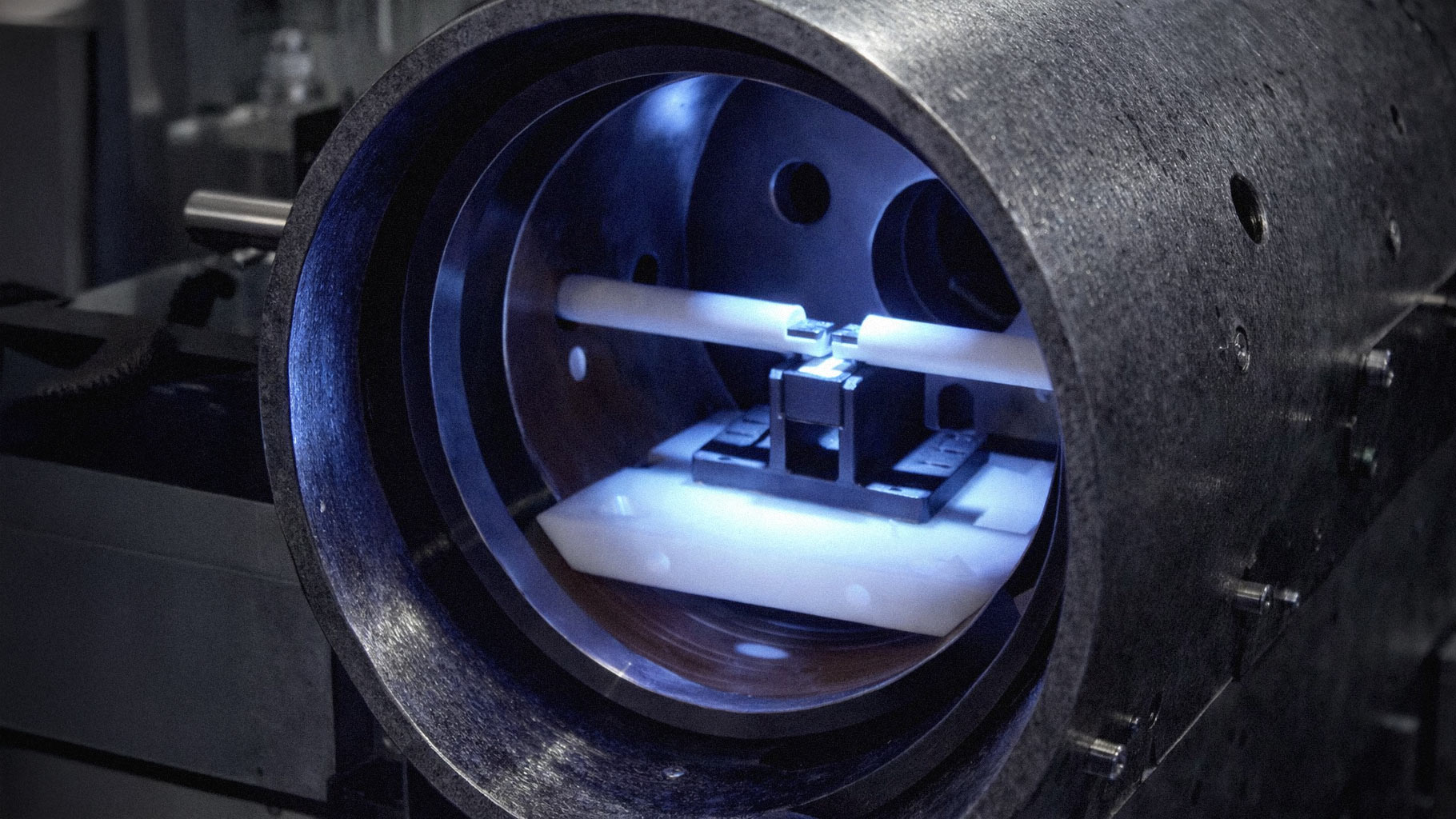

A useful quantum computer needs several things working simultaneously. First, it needs qubits with long coherence times, meaning they can maintain their quantum states long enough to complete calculations. Current superconducting qubits from companies like IBM have coherence times measured in microseconds, typically tens to hundreds of them. With each gate taking tens of nanoseconds or more, that's not much runway for complex algorithms.
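A back-of-the-envelope way to see how short that runway is: divide the coherence time by the duration of one gate. The sketch below does only that. The coherence times and the 50 ns gate duration are illustrative assumptions, not measured specs for any particular device, and `gate_budget` is a hypothetical helper name.

```python
# Rough "gate budget": how many back-to-back gates fit in one coherence window.
# All numbers are illustrative assumptions, not device specifications.

def gate_budget(coherence_time_us: float, gate_time_ns: float) -> int:
    """Number of sequential gates that fit inside one coherence time."""
    coherence_time_ns = coherence_time_us * 1_000  # microseconds -> nanoseconds
    return int(coherence_time_ns // gate_time_ns)

if __name__ == "__main__":
    assumed_gate_time_ns = 50
    for t_us in (50, 100, 300):
        n = gate_budget(t_us, assumed_gate_time_ns)
        print(f"coherence {t_us} us, {assumed_gate_time_ns} ns gates -> about {n} gates")
```

This is an upper bound at best. Errors accumulate continuously, so the usable circuit depth is well below the number this returns.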

Second, it needs high-fidelity gates. Every operation you perform on a qubit introduces some probability of error. If your two-qubit gate has 99% fidelity, that sounds impressive until you realize that errors compound. Assuming independent errors, after 100 such operations the chance that none of them has gone wrong is only about 37% (0.99^100 ≈ 0.366), so the computation is more likely corrupted than not. Scaling to thousands of qubits means millions of gate operations, which requires fidelity numbers far beyond what exists today.
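The compounding is easy to check numerically. This sketch assumes every gate fails independently with the same probability, which is a simplification of real device noise. The function names are illustrative.

```python
import math

def success_probability(gate_fidelity: float, num_gates: int) -> float:
    """Probability that no gate errs, assuming independent, identical gates."""
    return gate_fidelity ** num_gates

def max_gates_for_target(gate_fidelity: float, target_success: float) -> int:
    """Largest gate count whose error-free probability stays at or above the target."""
    return math.floor(math.log(target_success) / math.log(gate_fidelity))

if __name__ == "__main__":
    for fidelity in (0.99, 0.999, 0.9999):
        p100 = success_probability(fidelity, 100)
        depth = max_gates_for_target(fidelity, 0.5)
        print(f"fidelity {fidelity}: P(no error in 100 gates) = {p100:.3f}, "
              f"gates before success drops below 50% = {depth}")
```

Under this model, each extra "nine" of fidelity buys roughly ten times more gates before the odds of an error-free run fall below even.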

Third, and most critically, it needs quantum error correction. This is where the math gets brutal. To create one logical qubit that's protected from errors, you need many physical qubits working together in carefully orchestrated patterns. Estimates vary with the error-correcting code and the hardware error rate, but achieving fault-tolerant computation might require 1,000 to 10,000 physical qubits per logical qubit. Since an algorithm like Shor's needs on the order of thousands of logical qubits to threaten the key sizes in use today, a quantum computer capable of breaking encryption might need millions of physical qubits.
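To see where overhead figures in that range can come from, here is a rough sketch based on a commonly quoted surface-code rule of thumb. None of it comes from the text above. It assumes a logical error rate of about A·(p/p_th)^((d+1)/2) for code distance d and about 2d² − 1 physical qubits per logical qubit. The threshold (1%), the prefactor (0.03), and the target logical error rate (10⁻¹²) are all assumed values chosen for illustration.

```python
# Surface-code overhead estimate from a textbook-style heuristic.
# ASSUMPTIONS (not from the article): threshold p_th = 1e-2, prefactor = 0.03,
# logical error ~ prefactor * (p / p_th) ** ((d + 1) / 2), qubits ~ 2*d*d - 1.

def logical_error_rate(p: float, d: int, p_th: float = 1e-2, prefactor: float = 0.03) -> float:
    """Heuristic logical error rate for physical error rate p and odd distance d."""
    return prefactor * (p / p_th) ** ((d + 1) // 2)

def min_distance(p: float, target: float, p_th: float = 1e-2,
                 prefactor: float = 0.03, max_d: int = 999) -> int:
    """Smallest odd code distance whose heuristic logical error rate meets the target."""
    if p >= p_th:
        raise ValueError("physical error rate must be below threshold")
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p, d, p_th, prefactor) <= target:
            return d
    raise ValueError("target not reachable within max_d")

def physical_qubits(d: int) -> int:
    """Data plus measurement qubits for one distance-d surface-code patch."""
    return 2 * d * d - 1

if __name__ == "__main__":
    target = 1e-12  # assumed logical error rate per operation
    for p in (1e-3, 5e-3):
        d = min_distance(p, target)
        print(f"physical error {p}: distance {d}, "
              f"{physical_qubits(d)} physical qubits per logical qubit")
```

With these assumed parameters, a physical error rate of 0.1% needs distance 21 (881 physical qubits), while 0.5% needs distance 69 (9,521 physical qubits). The overhead is driven far more by how far the hardware sits below threshold than by any fixed ratio.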

The Hardware Stack Nobody Talks About

NVIDIA has positioned itself in the quantum space through classical computing that controls and simulates quantum systems. But even this interface presents challenges. The control electronics for superconducting qubits typically sit at room temperature while the qubits themselves operate at a few tens of millikelvin, colder than outer space. Every wire connecting these two regimes is a potential source of heat and noise.

Scaling also requires solving problems in cryogenic engineering, microwave electronics, and materials science simultaneously. The noise problem extends beyond the qubits themselves into every component of the system.

Companies making progress understand that qubit count is a vanity metric. IBM's roadmap focuses heavily on error rates and connectivity rather than raw numbers. Google's Willow processor emphasized error correction improvements over qubit scaling.

The path forward isn't obvious, and it's not guaranteed. Quantum computing might require entirely new physical implementations. Topological qubits, photonic systems, neutral atoms, and trapped ions all offer different tradeoffs. Each faces its own scaling challenges.

What we have now are laboratory instruments, not general-purpose computers. The gap between here and there is measured not in qubits added but in noise conquered.