Robots have had a depth perception problem for years: not at range, where lidar and traditional stereo cameras work fine, but up close, where the actual work happens. The new StereoLabs ZED X Nano, launched this week by Ouster, is designed specifically to close that gap.

The Close-Range Problem

Most depth sensors on the market were built for navigation or surveillance. They excel at telling a robot where walls are, or tracking objects across a room. But robotic manipulation requires something different: precise depth data at distances measured in centimeters, not meters. Pick-and-place operations, assembly tasks, and surgical assistance all demand accuracy at exactly the range where traditional systems fall apart.

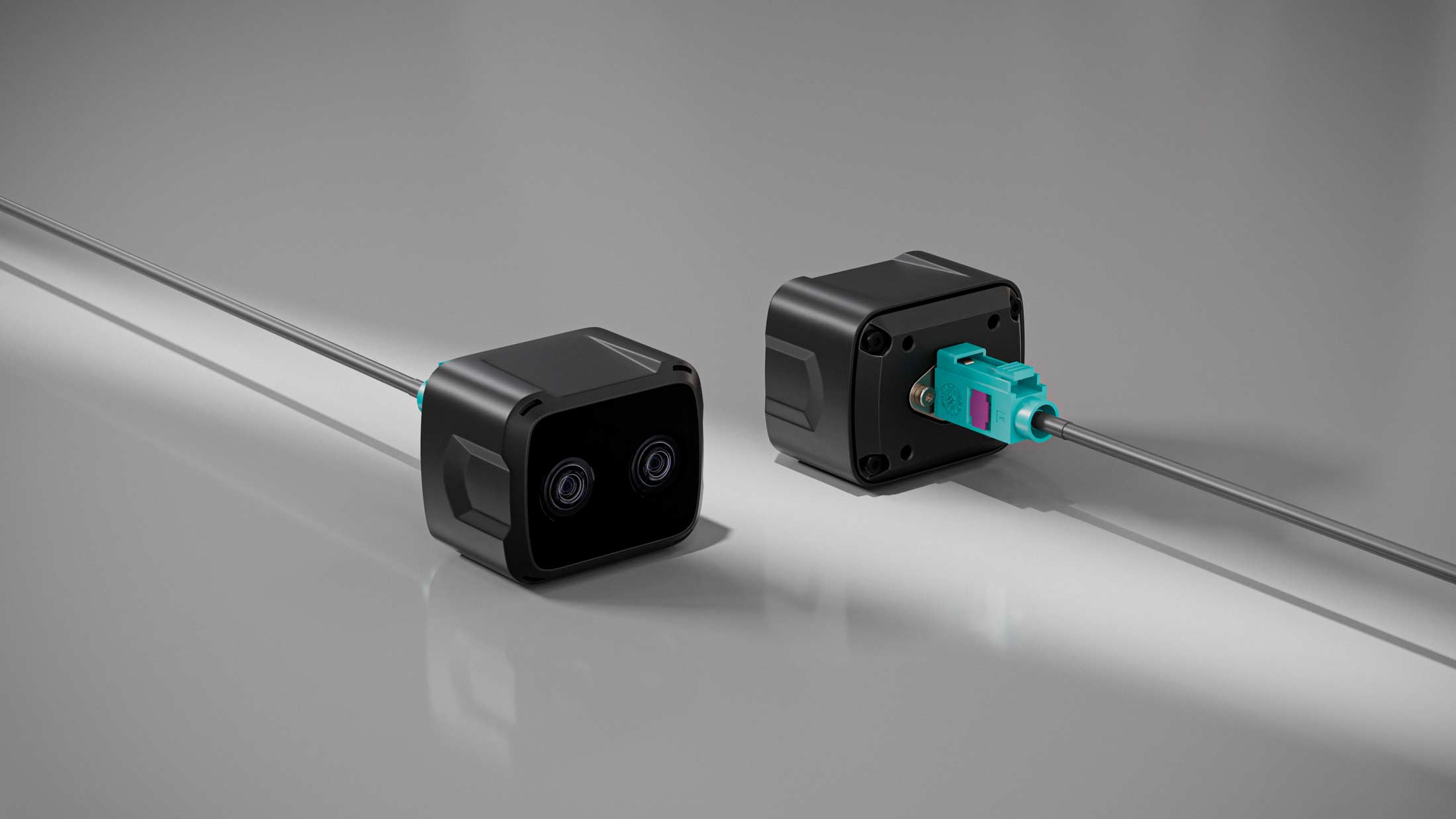

The ZED X Nano addresses this with a form factor small enough to mount on a robotic wrist. The specifications suggest a system optimized for exactly the working envelope where manipulators operate. According to Ouster, the camera delivers sub-millimeter depth accuracy within its optimal range, which could represent a meaningful improvement for physical AI applications that require fine motor coordination.

Why Stereo Vision Still Matters

The robotics industry has spent the past decade chasing various depth-sensing approaches. Time-of-flight sensors, structured light, and lidar have all found their niches. But stereo vision, the same approach human eyes use, retains advantages that matter for manipulation tasks.

Stereo cameras can operate in varied lighting conditions where active sensors struggle. They produce dense depth maps rather than sparse point clouds. And they scale down more gracefully than competing technologies. The challenge has always been baseline, the physical separation between the two cameras. Stereo systems triangulate depth from that separation, so shrinking it to make the camera more compact reduces depth precision, which limits how small a stereo camera can get while staying accurate at close range.
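The baseline trade-off can be made concrete with the standard pinhole stereo relations: depth is focal length times baseline divided by disparity, and depth uncertainty grows with the square of distance. The sketch below is illustrative only. The function names are hypothetical, and the focal length, baselines, and matching error are assumed values, not ZED X Nano specifications.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Triangulated depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(focal_px: float, baseline_m: float, depth_m: float,
                disparity_error_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)


def min_depth(focal_px: float, baseline_m: float, max_disparity_px: float) -> float:
    """Closest measurable depth given the matcher's disparity search range."""
    return focal_px * baseline_m / max_disparity_px


if __name__ == "__main__":
    focal_px = 1000.0          # assumed focal length in pixels
    disparity_error_px = 0.1   # assumed sub-pixel matching error
    depth_m = 0.3              # a manipulation-range distance, 30 cm

    # Compare a wide and a narrow baseline (both values are assumptions).
    for baseline_m in (0.12, 0.05):
        err_mm = 1000.0 * depth_error(focal_px, baseline_m, depth_m, disparity_error_px)
        near_m = min_depth(focal_px, baseline_m, max_disparity_px=256.0)
        print(f"baseline {baseline_m * 100:.0f} cm: "
              f"error at {depth_m} m ~ {err_mm:.3f} mm, min depth ~ {near_m:.2f} m")
```

Under these assumptions, the narrower baseline gives a coarser depth estimate at the same distance but can measure closer to the lens. A wrist-mounted camera has to balance those two effects.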

StereoLabs appears to have engineered around this constraint with the Nano's design, though real-world performance will matter more than specifications. The company's existing ZED line has earned a solid reputation among robotics developers, which gives the new product some credibility by association.

The Physical AI Angle

This launch arrives at an interesting moment for robotics. Companies are racing to deploy systems capable of performing useful physical tasks in unstructured environments such as warehouses, hospitals, and manufacturing floors. These settings demand robots that can handle objects they've never seen before, in lighting conditions that change by the hour.

The humanoid robotics push has highlighted how far the industry still needs to go on perception. Vision models have improved dramatically, but they need quality input data to work with. A camera that provides reliable depth information at manipulation distances could prove more valuable than marginal improvements in the AI models themselves.

Ouster's involvement also suggests broader distribution potential. The company has established relationships across the autonomous vehicle and industrial automation sectors, which could accelerate adoption beyond the research labs where StereoLabs products have traditionally found their audience.

What Remains to Be Seen

Hardware launches in robotics often look better on paper than in deployment. Integration challenges, calibration requirements, and edge-case failures have humbled many promising sensors. The ZED X Nano will face scrutiny from developers who have been burned before.

But the timing feels right. Robot manipulation has advanced to the point where perception has become the bottleneck for many applications. Foundation models for robotics are improving rapidly, but they need eyes that can actually see what's in front of them. A purpose-built solution for that specific problem, from a company with a track record in stereo vision, is the kind of infrastructure development the industry needs.

The ZED X Nano ships later this quarter, with pricing targeted at research and commercial robotics developers.