Alibaba's Qwen team shipped a notable release this week. On April 16, the company released Qwen3.6-35B-A3B under the Apache 2.0 license, making it one of the most capable open-source AI models available for commercial use. The technical approach matters: it is a sparse mixture-of-experts (MoE) architecture with 35 billion total parameters, of which only about 3 billion are active for any given token.
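To make "sparse" concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind mixture-of-experts layers. It is an illustration under stated assumptions, not Qwen's implementation: the expert count, the value of `k`, and the toy experts are all invented for the example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k):
    """Run only the selected experts and mix their outputs; the rest are skipped."""
    chosen = route_top_k(router_logits, k)
    return sum(w * experts[i](x) for i, w in chosen)

# Toy setup (assumption): 8 experts, each just scales its input by a constant.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]

selected = route_top_k(logits, k=2)
output = moe_forward(1.0, experts, logits, k=2)
print("experts used:", [i for i, _ in selected], "of", len(experts))
```

Every expert's weights still exist, but each token only pays for the `k` experts the router picks, which is how total and active parameter counts can diverge.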

That efficiency gap is the whole story. Because only about 3 billion parameters take part in each token's computation, the model needs a fraction of the compute and energy per token that a dense 35B model would, although all 35 billion parameters still have to be held in memory. Yet Alibaba claims this release matches the agentic coding performance of models with 10 times as many active parameters.
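The size of that gap can be estimated with back-of-the-envelope arithmetic. The sketch below assumes the common rule of thumb that a forward pass costs roughly 2 FLOPs per active parameter per token; it ignores attention overhead and routing cost, so treat the result as an order-of-magnitude guide rather than a measurement.

```python
TOTAL_PARAMS = 35e9   # total parameters, from the release name
ACTIVE_PARAMS = 3e9   # parameters active per token

def forward_flops_per_token(active_params):
    """Rule-of-thumb estimate: about 2 FLOPs per active parameter per token."""
    return 2 * active_params

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
dense_flops = forward_flops_per_token(TOTAL_PARAMS)
sparse_flops = forward_flops_per_token(ACTIVE_PARAMS)
compute_ratio = dense_flops / sparse_flops

print(f"active fraction: {active_fraction:.1%}")               # 8.6%
print(f"dense vs. sparse compute per token: {compute_ratio:.1f}x")  # 11.7x
```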

The Benchmarks Tell a Clear Story

The numbers are worth examining. Qwen3.6-35B-A3B outperforms its predecessor Qwen3.5-35B-A3B across coding and agent tasks. It also beats the dense Qwen3.5-27B model despite running fewer active parameters during inference. On vision-language benchmarks, the results get more interesting: the model matches or exceeds Claude Sonnet 4.5, a model from one of the best-funded AI labs in the world, on several tests.

Spatial intelligence scores stand out. The model hit 92.0 on RefCOCO, a benchmark testing how well AI can locate objects based on natural language descriptions, and 50.8 on ODInW13, which measures object detection across diverse domains. These aren't marginal improvements. They suggest multimodal perception in open models is catching up to proprietary systems faster than most predicted.
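For readers unfamiliar with how such grounding scores are produced: RefCOCO-style results are commonly reported as the share of predicted boxes whose intersection-over-union (IoU) with the ground-truth box is at least 0.5. The sketch below shows that metric under that assumption; the article does not state the exact protocol Alibaba used, and the boxes here are made up.

```python
def iou(box_a, box_b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0

def grounding_accuracy(predictions, targets, threshold=0.5):
    """Fraction of predictions whose IoU with the target meets the threshold."""
    hits = sum(iou(p, t) >= threshold for p, t in zip(predictions, targets))
    return hits / len(targets)

# Made-up example: one exact match, one poor overlap.
preds = [(0, 0, 2, 2), (0, 0, 2, 2)]
truth = [(0, 0, 2, 2), (1, 1, 3, 3)]
print(grounding_accuracy(preds, truth))  # 0.5
```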

Two Modes of Thinking

The model ships with what Alibaba calls thinking and non-thinking modes. The thinking mode allows the model to reason through complex problems step by step, useful for coding tasks or mathematical proofs. Non-thinking mode provides direct answers for straightforward queries. Users can toggle between them based on their needs.

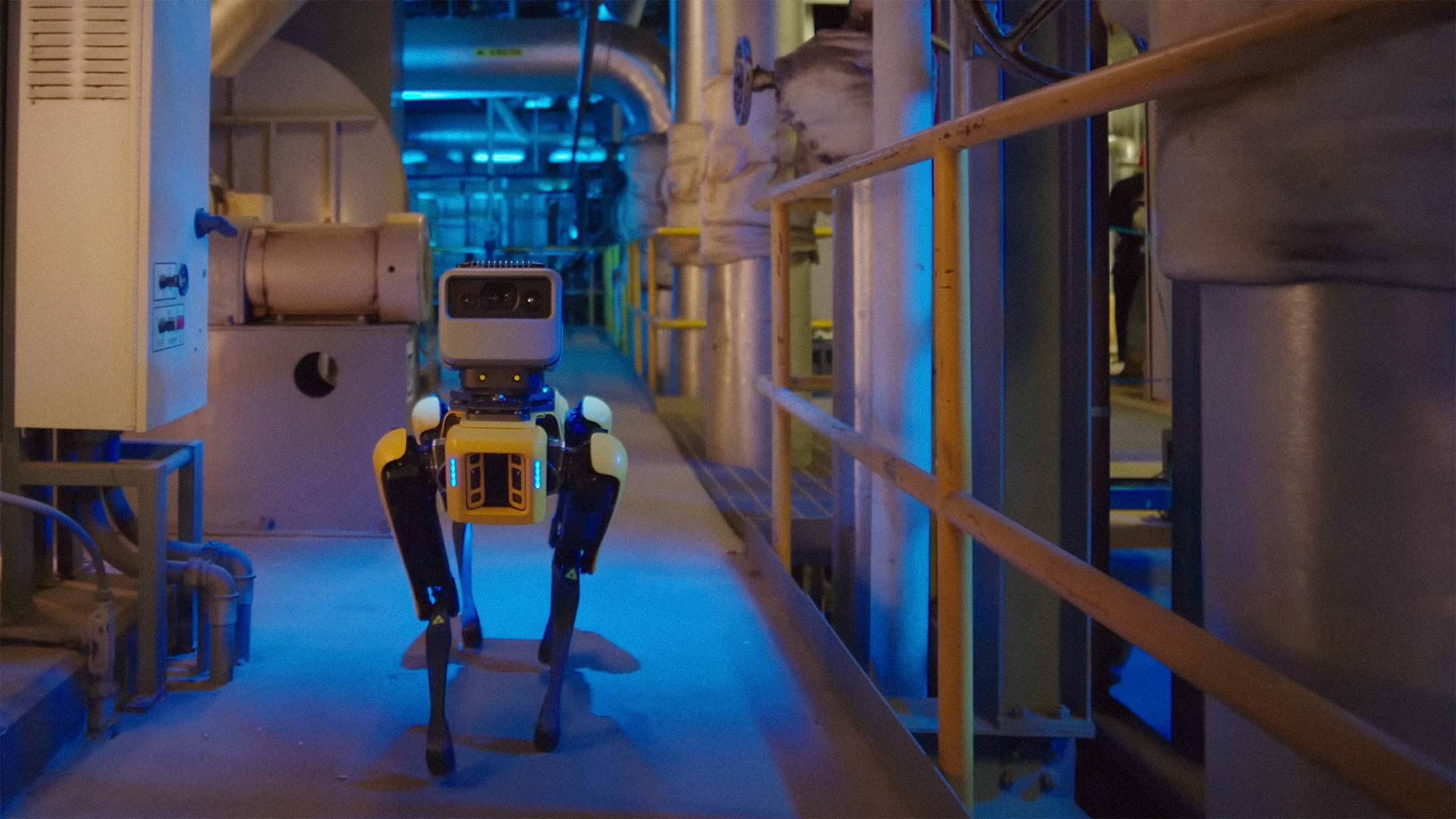

This flexibility matters for deployment. Agentic coding, where AI systems write, test, and iterate on code autonomously, requires different reasoning approaches depending on the task complexity. A model that can switch between deliberate reasoning and quick responses handles more real-world scenarios without requiring multiple specialized models.
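As a rough illustration of how an application might pick a mode per request, here is a hypothetical dispatcher. Everything in it is an assumption made for the example: the `enable_thinking` field name, the keyword heuristic, and the `Request` type are not taken from Alibaba's documentation, so check the model's own docs for the actual switch.

```python
from dataclasses import dataclass

@dataclass
class Request:
    prompt: str
    enable_thinking: bool  # hypothetical flag name, not a confirmed API field

# Crude, illustrative heuristic: phrases that suggest multi-step reasoning.
REASONING_HINTS = ("prove", "debug", "refactor", "step by step", "why")

def build_request(prompt, force_thinking=None):
    """Choose thinking mode from an explicit override or a keyword heuristic."""
    if force_thinking is not None:
        thinking = force_thinking
    else:
        lowered = prompt.lower()
        thinking = any(hint in lowered for hint in REASONING_HINTS)
    return Request(prompt=prompt, enable_thinking=thinking)

print(build_request("Debug this failing test suite"))
print(build_request("What is the capital of France?"))
```

A real agent would likely decide based on richer signals, such as task type or a retry after a failed quick answer, but the shape is the same: one model, one flag, chosen per call.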

Why Open Source Matters Here

The Apache 2.0 license is a permissive standard that lets anyone use, modify, and commercialize the model with few conditions beyond preserving the license and attribution notices. This isn't Alibaba being altruistic. Open-source releases build ecosystems, attract talent, and establish technical standards that benefit the releasing company over time. But the downstream effects for everyone else are real.

Researchers can study the architecture. Startups can build products without negotiating API access or worrying about rate limits. Developers in countries with unreliable cloud infrastructure can run capable models locally. The proliferation of AI capabilities across industries accelerates when the barrier to entry drops from millions in compute costs to a decent GPU.

There's a reasonable concern that open-sourcing powerful models creates risks. But the counterargument is stronger: concentrating AI capability in a handful of companies creates different risks, and probably worse ones. When capable models are widely available, more people can identify problems, build safeguards, and develop applications that serve diverse needs rather than just profitable ones.

Alibaba's release also puts pressure on competitors. Meta's Llama models have driven significant innovation precisely because they're open. Now Qwen3.6 raises the bar for what efficient, open multimodal models can do. Closed-model companies will need to demonstrate clearer advantages to justify their pricing.

The sparse mixture-of-experts approach in particular suggests a path toward running sophisticated AI on consumer hardware. If 3B active parameters can match the performance of a 30B dense model, the hardware requirements for capable AI may plateau sooner than expected.
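Whether that fits on consumer hardware depends mostly on weight storage, since every expert has to be resident even if only a few run per token. The estimate below is a weights-only sketch: it assumes 35 billion parameters at common precisions and ignores the KV cache, activations, and quantization overhead, so real requirements will be somewhat higher.

```python
TOTAL_PARAMS = 35e9  # all experts must be stored, not just the active ones

# Bytes per parameter at common storage precisions.
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}

def weight_memory_gb(total_params, bytes_per_param):
    """Weights-only footprint in gigabytes (1 GB = 1e9 bytes)."""
    return total_params * bytes_per_param / 1e9

for precision, nbytes in BYTES_PER_PARAM.items():
    print(f"{precision}: {weight_memory_gb(TOTAL_PARAMS, nbytes):.1f} GB")
# 16-bit: 70.0 GB
# 8-bit: 35.0 GB
# 4-bit: 17.5 GB
```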